Testing Activities

In my previous blog post, Part 1, What is Testing, I talked about what I felt testing was about. In this second part of my series about testing and quality management, I get more specific about different types of testing and expand on models that help teams visualize testing so the whole team can participate in testing activities.

Testing isn’t ‘just’ about finding bugs. It’s also about discovering different kinds of information that enable us to identify risks. That information feeds into better decision making to mitigate those risks.

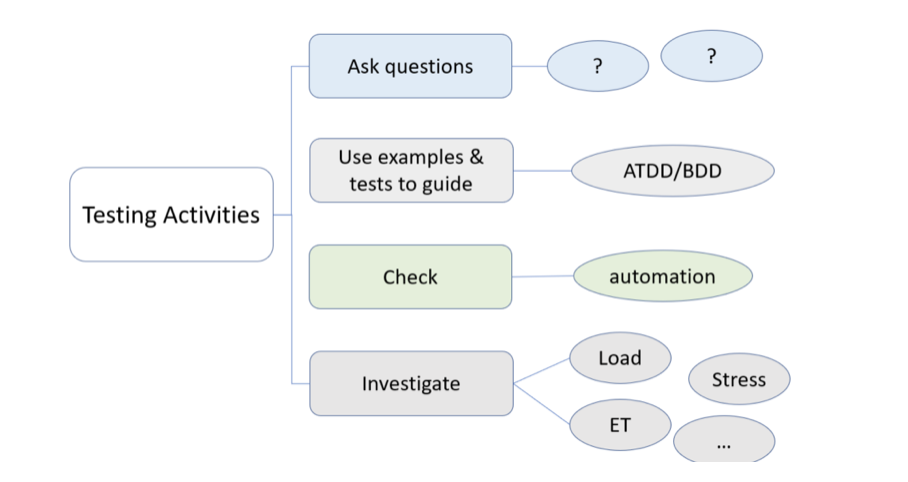

TYPES OF TESTING ACTIVITIES

There are different ways to categorize testing activities. This is one way.

Asking questions

Testers make great question askers (QA) because they think about the ‘what if’ questions that others may not. There are many good ways to ask questions, but that is a post all on its own. One thing to remember when asking questions is to listen carefully to the answers. It is often too easy to say thank you without considering follow-up questions to clarify.

Also, ask open-ended questions rather than ones requiring a yes/no answer. For example, instead of saying, “Do we have to consider ‘X’ as a user of the system?”, ask “Who will be using the system?” because that will allow you to clarify as you go.

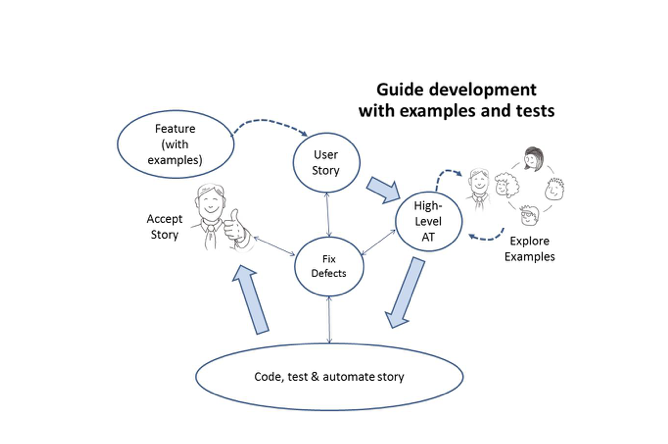

Guiding development

Acceptance test-driven development (ATDD) and behaviour-driven development (BDD) are two common approaches to guiding development with examples.

The team takes a story, has their discussions – perhaps a 3-amigos session going through examples to understand the story. They use these examples to write acceptance tests and have more conversations. As they learn more, they continue to write tests for business rules in an executable format against the API / service layer.

The programmer then takes these tests and can apply TDD, writing unit tests, then code, and refactoring as necessary until they pass. If the team works in this way, the tests at the unit level and the API are automated as the code is developed, and the checks are in place to make sure the system does what the team thinks it should do. The tester is guiding the automation using their test design skills, looking for the best tests – not only happy path tests, but also testing the misbehaviours, the what-if type scenarios.
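As a minimal sketch of what examples written in an executable format might look like, assume a hypothetical business rule: orders of 100.00 or more ship free, and smaller orders pay a flat fee of 5.00. The rule, the amounts, and the names `shipping_fee`, `run_examples`, and `rejects_negative_total` are all invented for illustration and do not come from any real project. The examples cover the happy path, the boundary, and one what-if case.

```python
from decimal import Decimal

FREE_SHIPPING_THRESHOLD = Decimal("100.00")  # hypothetical business rule
FLAT_FEE = Decimal("5.00")                   # hypothetical business rule


def shipping_fee(order_total: Decimal) -> Decimal:
    """Service-layer function written after the team agreed on the examples."""
    if order_total < 0:
        raise ValueError("order total cannot be negative")
    if order_total >= FREE_SHIPPING_THRESHOLD:
        return Decimal("0.00")
    return FLAT_FEE


# Examples agreed in the team conversation: (description, order total, expected fee)
EXAMPLES = [
    ("small order pays the flat fee", Decimal("20.00"), Decimal("5.00")),
    ("just under the threshold still pays", Decimal("99.99"), Decimal("5.00")),
    ("exactly at the threshold ships free", Decimal("100.00"), Decimal("0.00")),
]


def run_examples() -> list:
    """Return the descriptions of examples whose actual fee differs from the expected fee."""
    failures = []
    for description, total, expected in EXAMPLES:
        if shipping_fee(total) != expected:
            failures.append(description)
    return failures


def rejects_negative_total() -> bool:
    """What-if case: a negative total should be rejected rather than priced."""
    try:
        shipping_fee(Decimal("-1.00"))
    except ValueError:
        return True
    return False
```

In a real ATDD flow the examples would exist first and fail until the code is written; they are shown together here only to keep the sketch runnable. The value is less in the code than in the conversation that decides, for instance, what should happen exactly at the threshold.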

Investigating

Of course, we also need to do investigative testing – human-centric testing. Most testers get energized when they do investigative testing. They use their critical thinking skills and experience to find those more subtle bugs in the code that were missed earlier. Hopefully, if the team has been working on all the ‘early’ testing activities, there will be fewer bugs to find, and those that remain will be less critical.

It is not enough to find the bugs and report them. We also need to communicate the risk, tell the testing story, influence by pitching ideas for solutions, speak with customers to understand their experiences and use the information from these conversations to feed ideas for improvement to the team.

Checking

I won’t get into the automation used in testing too much here, except to say that automation for regression testing is an activity for checking that the system does what it did yesterday. It is a great change detector because it does the same thing again and again in the very same way – the stuff humans do not do well.

Automation is a tool to check for consistency, as well as for tracking and reporting results over time. When done well, it enables testers to focus on the human-centric testing I talked about in the other three activity types.
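To illustrate the change detector idea, here is a minimal sketch that compares today's observed results with a baseline recorded yesterday. The function name `detect_changes`, the check names, and the sample results are hypothetical; a real regression suite would gather these values by exercising the system.

```python
def detect_changes(baseline: dict, current: dict) -> dict:
    """Report every check whose result differs between the baseline and the current run."""
    changes = {}
    for name in sorted(baseline.keys() | current.keys()):
        before = baseline.get(name, "<missing>")
        after = current.get(name, "<missing>")
        if before != after:
            changes[name] = (before, after)
    return changes


# Hypothetical results recorded from two runs of the same checks
yesterday = {"login": "200 OK", "search tea": "3 results", "checkout": "200 OK"}
today = {"login": "200 OK", "search tea": "5 results", "checkout": "200 OK"}

print(detect_changes(yesterday, today))
# prints {'search tea': ('3 results', '5 results')}
```

Note that the check only reports that something changed. Whether the change is a bug or an intended improvement is still a question for a person to answer.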

AGILE TESTING QUADRANTS

If we look at the agile testing quadrants briefly, we can see that there are many types of testing. This diagram shows examples of test types that a team may execute. It is far from complete, and every team’s context will be different, so I recommend that teams use this as a thinking tool – a way to discuss what tests they might need. I talk about contexts a little later in this post.

The tests on the left-hand side are tests we run early – before the code is written. These help to expose risks and flush out misunderstandings. The misunderstandings are often the cause of defects. If we can eliminate some of the misunderstandings, we are preventing defects from finding their way into the code.

An example I use from my own experience:

I sat with the developer and told him all the API automated tests were checked in and ready to go. He checked them out from our version control, looked at them and said, “These tests won’t pass.” I looked at him inquiringly because we were in all the meetings together, and we had sat together to put the test method in place. How could these tests not pass? He hadn’t written the code yet. As it turns out, we had a different understanding of how the search feature would work. It was after this particular issue came to light that I started using more concrete examples in our discussions. But, bottom line – if I hadn’t written the tests first and we hadn’t looked at them together, he would have created code with a major bug. Because we made it visible early, it was a simple matter to fix. No code had yet been written. I helped prevent a bug in the code.

Back to the quadrants. The right-hand side shows tests that critique the product. The top-right are business-facing tests, which means that the business can read and understand them. They may also be involved in executing them – for example, user acceptance tests. The bottom-right are the technology-facing tests which critique the product. This quadrant houses most of the tests for operational and deployment quality attributes. Even if the team has discussions early about what is important for the customer and the team, how they might instrument the code to help, or how to implement better for security, in my experience we cannot execute the actual tests until code has been written. These investigative tests are not only about finding defects, but also about learning more about the application under test and giving feedback to the team.

TESTING IN DIFFERENT CONTEXTS

There are many types of testing and there are many contexts in which we test. When we combine these two aspects, one person cannot possibly know all there is to know.

Up until this point, I have not mentioned agile, or DevOps or continuous delivery or phased/gated (waterfall) methods, or any other kind of development process that your team may be using. In phased and gated projects, there is usually a test team that shares responsibility for testing the software that was delivered to them. Most of their testing is concentrated on those tests that find bugs.

My recent experience (the last 20 years) has been in teams that use short cycles and frequent deliveries, with embedded testers in product teams and the business working hand in hand with the delivery teams – in short, agile, DevOps, continuous delivery … whatever flavour you choose to call it. I’ll use the word agile here for simplicity. An embedded tester on a team cannot hope to do all the testing for a product. They cannot possibly have all the skills. They have their basic testing techniques, with some expertise in one or more specific techniques. They can learn and have deep knowledge about the domain. But, depending on the product, they may need to learn other skills as well – for example, strong database and data integrity skills are required if they are working with data warehousing.

There is no one-size-fits-all tester, so the responsibility for testing activities is shared by the team. The whole team is responsible for quality.

SUMMARY

Testing is about risk discovery and mitigation, whether you ask questions early in the feature cycle, define tests before coding to help guide development, investigate the product for the unknowns that we didn’t think about, or watch test results for actionable trends that can improve the quality of the product or the process. All these tasks help to build quality into the product, but they are not enough.

Testers have always had to coach, teach, influence, communicate, think laterally, think critically, pitch ideas for solutions to bugs/incidents, pitch ideas for new products to solve wider software and product solution problems, speak to customers and users about their experiences, use the information from those conversations to feed into development and testing ideas, manage test environments, get involved in release management, etc. The list is endless, but these things by themselves do not solve the quality problem.

In my next blog post, I’ll start exploring quality and what other things we need to consider.

For a bit more reading or different perspective, check out: https://www.eviltester.com/post/fundamentals/definitions-of-software-testing/

10 Responses

This really sums it up beautifully, and I can’t wait to read the next post.

Thank you.

“Testing isn’t ‘just’ about finding bugs. It’s also about discovering different kinds of information that enables us to identify risks. That information feeds into better decision making to mitigate those risks.”

I like this way of thinking about testing more than just confirming it’s working according to some requirements. A critical eye to uncover risks is our unique value added in a team, IMHO.

Thank you Janet and look forward to reading the next one.

I do think the critical thinking skills are useful in so many ways. I’m happy you do too.

I love to be a QA… a question asker. When I become a QA, I learn a lot, I add value to the product and I invite the team to think about it. I believe being a QA is an awesome skill.

I thank Pete Walen for that phrase every time I use it. It is so powerful I think.

very useful information mainly for testers as beginners

Thanks Janet for this great series of articles! Found them very insightful.

Regarding the quadrants, I had a thought on the left side. You mention tests run ‘before the code is written’ for Quadrants 1 (technology-facing) and 2 (business-facing). My understanding is that while the test cases for these quadrants are indeed often defined early, sometimes even before coding begins, they are typically run by developers as the code is being written or immediately after. This provides that instant feedback to “support the team” (even if run locally).

In contrast, the tests on the right side of the quadrant will most likely require a fully integrated and functioning system to be run.

Thanks again for these knowledge gems!

I’m glad you like the series. Yes, quadrant 1 is really all about the unit tests – and hopefully the programmers are practicing TDD, writing the tests before they write code. Quadrant 2 tests can be written by anyone, including testers or product owner type people. The real power is the discussion that happens around those tests – is this really how we expect this to work? And you are also correct about the right side – the tests usually run on a full system.

As a side note, Lisa and I wrote a book called “Holistic Testing” to try to encapsulate all these ideas in one place.

Thanks for your reply Janet.

I guess my comment was more about the phrasing around “run early – before the code is written.” I was expecting the emphasis to be more on “written early” rather than actually run before the code exists, since, in practice, those tests (especially in Q1 and Q2) are usually run as code is being developed, not before any code exists.

But your reply helps clarify the intent. And absolutely, your book is on my radar – also your course about Holistic Testing 🙂

Thanks again!