At Agile 2010, there were about 20 of us at the AA-FTT (Agile Alliance Functional Test Tools) workshop. The emphasis was on “the state of the practice” of Acceptance Test Driven Development (ATDD). In one of the breakout sessions, we had an interesting discussion on what we actually meant by ATDD, which made me think a lot about the practice itself. Although we all knew that acceptance tests defined by the customer / business expert are what drive the TDD (test driven development) process, there were discrepancies in how each of us thought of the full process.

JB Rainsberger viewed ATDD as two concentric circles, with TDD, the developer practice of Test Driven Development, at the center and ATDD surrounding it. I felt his view of the practice was from a developer perspective: give me an example, and I will start coding. Many of us at the table thought it was more than that; it included the collaboration necessary to get the examples. Based on that discussion, the next question asked by JB was, “Then, what’s the difference between ATDD and BDD?”

Part of the problem is that we don’t all use the same vocabulary relating to the practice. I started by writing down all the words I know are used in conjunction with the practice. The following words and phrases are some of the ones I could think of:

- Examples, scenarios, acceptance tests, customer tests, behaviour, business-facing tests, story tests, functional tests, acceptance criteria, conditions of satisfaction, business rules, executable specification

There have been many explanations of ATDD, and I’ve included a couple here to help.

Jennitta Andrea wrote a short article for Iterations about what ATDD is, and why teams should be doing it. She described ATDD as:

“the practice of expressing functional story requirements as concrete examples or expectations prior to story development. During story development, a collaborative workflow occurs in which: examples written and then automated; granular automated unit tests are developed; and the system code is written and integrated with the rest of the running, tested software. The story is “done”—deemed ready for exploratory and other testing—when these scope-defining automated checks pass.”

The full article is at: http://www.stickyminds.com/Media/eNewsLetters/Iterations

A couple of years ago, Elisabeth Hendrickson used an example to describe ATDD in her blog: http://testobsessed.com/2008/12/08/acceptance-test-driven-development-atdd-an-overview/

As a result of the AA-FTT workshop, Declan Whelan, Gojko Adzic and a few others came up with a diagram trying to get a common understanding around Specification by Example. It is on Google Docs – http://tinyurl.com/3x52ap9

Dan North’s description of Behaviour Driven Development (BDD) (http://blog.dannorth.net/introducing-bdd/) is a bit more complicated, but I interpret it as using the word ‘behaviour’ rather than ‘test’, and using the “Given, When, Then” guidelines to describe the precondition, trigger and post-condition. In a recent agile testing Yahoo group post, Liz Keogh wrote:

“BDD’s focus is on the discovery of stuff we didn’t know about, particularly around the contexts in which scenarios or examples take place. This is where using words like “should” and “behaviour” comes in, rather than “test” – because for most people “test” presupposes that we know what the behaviour ought to be. “Should” lets us ask, “Should it? Really? Is there a context which we’re missing in which it behaves differently?””
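To make the “Given, When, Then” structure concrete, here is a minimal sketch in Python. The `Account` class and its `withdraw` rule are hypothetical, invented only for this illustration; they do not come from Dan North’s article or from any BDD tool. Each scenario simply labels its precondition, trigger and post-condition in comments.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical domain object, invented only for this illustration."""
    balance: int

    def withdraw(self, amount: int) -> bool:
        # Refuse the withdrawal when funds are insufficient.
        if amount > self.balance:
            return False
        self.balance -= amount
        return True


def scenario_withdrawal_within_balance() -> Account:
    # Given an account holding 100 (precondition)
    account = Account(balance=100)
    # When the customer withdraws 30 (trigger)
    accepted = account.withdraw(30)
    # Then the withdrawal should be accepted and 70 should remain (post-condition)
    assert accepted
    assert account.balance == 70
    return account


def scenario_withdrawal_exceeding_balance() -> Account:
    # Given an account holding 100 (precondition)
    account = Account(balance=100)
    # When the customer tries to withdraw 150 (trigger)
    accepted = account.withdraw(150)
    # Then the withdrawal should be refused and the balance unchanged (post-condition)
    assert not accepted
    assert account.balance == 100
    return account
```

The second scenario is the kind of thing the “Should it? Really?” question tends to surface: a context (insufficient funds) in which the behaviour differs from the first example.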

Whether we choose to call it BDD or ATDD or Specification by Example, we want the same result – a shared common understanding of what is to be built, so that we can try to build the ‘thing’ right the first time. We know it never will be, but the less rework, the better.

Using business rules, use cases, business expertise (customers, Business Analysts, domain experts, etc.), the development team’s (programmers, testers, DB guys, etc.) expertise, and any other supporting information, we can describe what we expect the software to do in the format of examples, which are just a specialized kind of test. In workflow type applications, describing behaviour may be applicable. If the application is calculation heavy, then a spreadsheet type test format with specific examples might be more appropriate.
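For the calculation-heavy case, a spreadsheet type format can be sketched as a table of rows, each one a concrete example with its expected result. The shipping rule below is a hypothetical business rule made up for this sketch, and `run_examples` is an illustrative helper, not part of any particular test tool.

```python
# Hypothetical business rule, invented for illustration:
# orders of 50 or more ship free; smaller orders pay a flat fee of 5.
def shipping_cost(order_total: int) -> int:
    return 0 if order_total >= 50 else 5


# Each row is one concrete example: (order total, expected shipping cost).
EXAMPLES = [
    (10, 5),
    (49, 5),
    (50, 0),
    (120, 0),
]


def run_examples(examples):
    """Return the rows whose actual result differs from the expected one."""
    failures = []
    for order_total, expected in examples:
        actual = shipping_cost(order_total)
        if actual != expected:
            failures.append((order_total, expected, actual))
    return failures
```

The rows at 49 and 50 are the sort of boundary examples a customer and the team would want to agree on before coding starts; an empty list from `run_examples(EXAMPLES)` means every example passes.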

Do these tests have to be automated first? I encourage new teams that are struggling to find the right tool to focus on the purpose of ATDD, which is to drive development to deliver the right ‘thing’. If we can do that with a sentence, let’s do that first. We do have to automate them at some point during the iteration, but until a team has found its rhythm or cadence, the automation may not come first. Once you have the automation, you have documentation that is up to date and describes the system behaviour.

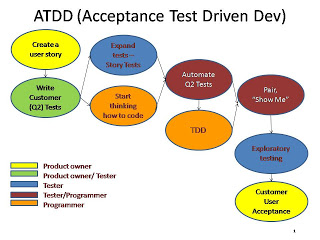

The picture I use to describe ATDD for new teams is:

I think the story isn’t ‘Done’ until the customer has validated the completed story, which includes pairing with the developer for a first look once he has finished coding, and the exploratory testing.

I will continue to use the phrase Acceptance Test Driven Development (ATDD) unless the industry decides on a common vocabulary, because I find that business stakeholders not only understand how to give examples, but also understand when I talk about acceptance tests that prove the intent of the story or feature. Once we have that, the team knows enough about the scope to start coding and testing. That’s what’s important.

10 Responses

Awesome post, Janet. I hope this starts a conversation between the BDD and ATDD communities. Terminology divergence aside, I hope all of us like-minded green-bar-addicts can learn to speak with one voice as a culture.

Cool Post. I like this very much.

Great stuff, Janet.

I don't think we'll ever get down to one vocabulary for all this. And I don't think we really want to: the variation from one group of people to another is valuable.

I came across your diagram via http://happytesting.wordpress.com/2012/01/05/elaborating-on-det-etdd-evolving/ which extends it slightly.

I noticed a very short step from Create A User Story to Write Customer Tests, and I've found (as I'm sure you have) that there's more to it than that. Generating and discussing examples to illustrate the desired behavior of the User Story is generally necessary to make that step. Without that, the transfer of ideas from the story writer to the test writer is generally rather uncertain.

I talk a bit about this in my Three Amigos article (http://manage.techwell.com/articles/weekly/three-amigos).

Thanks George. I've been looking to evolve the diagram without making it too complicated and keep going back to this simplistic one. I saw the blog post for the happy tester and it gave me some ideas too.

Thanks for the link to your 3 amigos article – I will use it for quoting. Lisa and I call that the Power of 3 and I believe it is critical for success.

Janet, ultimately I think the process is too varied and rich for a simple diagram to do it justice. I think it will take a series of simple diagrams, each illustrating some part of it.

It's so cool to see people you know and respect really thinking out this stuff as it evolves. Janet, let me know if you want to pair on the next iteration of your diagram.

Really interesting article. Makes me wonder why I wrote a 3 Amigos blog post though!! http://rthewitt.com/2013/02/07/the-3-amigos-ba-qa-and-developer/

I don't usually post comments from anonymous, but you left a link to your blog 'Agile Analyst'. I think it is important to articulate your understanding of concepts so keep blogging. I think you may have formalized the concept a bit too much, and if you haven't already, I suggest you read George Dinwiddie's blog on the 3 amigos http://manage.techwell.com/articles/weekly/three-amigos. He is the originator of this term.

I use the term 'power of 3' to mean basically the same thing, but in a much less formal way. Anytime a story is discussed, make sure you have at least 3 different perspectives present (domain, programming, and test).

Thank you for this great post, which points out that this approach (whether we call it ATDD, BDD, SbE, or anything else) is strongly related to stakeholders defining the product collaboratively. In my opinion testing is just a tool to keep the resulting specification and the application in sync. Therefore I like to start by describing the agreed behavior in natural language to make it readable by many stakeholders. When it comes to test automation my preference is Concordion, as it allows documentation to be written in plain English: http://concordion.org

I think testing is more than “just” writing specifications, but it can be a very important part in the automation of regression checks. I have not had the opportunity to work with Concordion but readable tests are important to many organizations for living documentation.